Functional testing always sounds simple when you explain it. Make sure the app works the way it should, check it off, and keep things moving.

But once you're actually doing it, especially in an enterprise setup, it rarely stays that clean.

You are not dealing with one clean workflow. You have multiple systems tied together, integrations that do not always behave the same way twice, and releases going out faster than most teams were originally built to handle. There is not really a distinct testing phase anymore. Testing is just part of everything, all the time.

That is where functional testing tools for automation come in. At this point, most teams already have something in place.

The real question is not whether you are using automation. It is whether it is actually helping when things get a little unpredictable.

Why Automated Functional Testing Is Now a Baseline

At this point, automation is not something teams aim for. It is something they are expected to have.

With how fast development moves today:

- Releases happen more frequently

- Systems change mid-cycle

- Manual testing struggles to keep up

By the time a manual regression cycle finishes, parts of the application have already shifted.

That is why automated functional testing tools have become part of day-to-day work. They let teams keep validating critical workflows without slowing everything else down.

But this is also where things start to get a little messy.

Where Things Start to Break

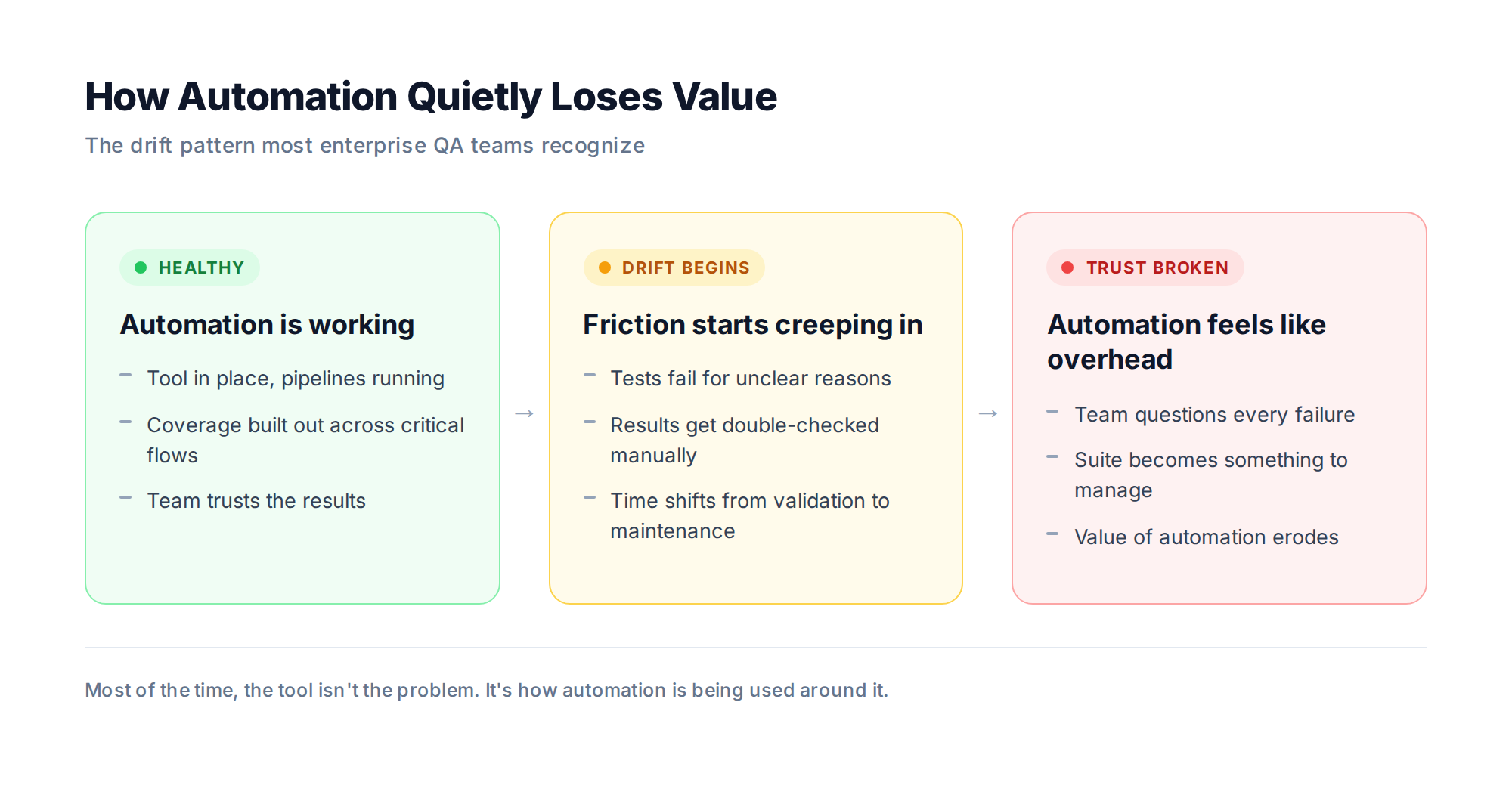

Most teams go through the same progression:

- Bring in a testing tool

- Build out automation coverage

- Expect consistency

Instead, they run into friction.

One test fails, then another. Before long, you are spending more time fixing tests than actually catching real issues. It is a slow shift, but once it happens, it is hard to ignore.

You start to see patterns like:

- Tests failing for unclear reasons

- Results getting double-checked manually

- Time shifting from validation to maintenance

And over time, that changes how the team feels about automation.

Instead of trusting it, they start questioning it.

That is the turning point: automation stops feeling like an advantage and starts feeling like something you have to manage.

And most of the time, that is not really about the tool. It is about how it is being used.

Why Enterprise Application Testing Feels Different

Enterprise testing is not just bigger. It is fundamentally different.

You are not validating isolated features. You are validating entire business processes that stretch across systems.

What looks like a simple action can trigger a lot behind the scenes:

- Services communicating

- Data moving across systems

- APIs firing

- External integrations kicking in

So when something breaks, it is not always obvious where it actually started.

And the stakes are higher.

A defect in an enterprise system can impact:

- Revenue

- Customer experience

- Compliance requirements

That is why enterprise application testing needs more than basic automation. It needs tools that can handle how systems behave in real conditions.

What we see on enterprise engagements

A single defect in a billing microservice can quietly affect reporting in a system two layers away. That is why our QA engagements with enterprise clients like HDFC Bank, ixigo, and JSW One usually start with mapping system dependencies before writing a single test. The tool is not what determines coverage. The testing strategy around it is.
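One way to picture that kind of dependency mapping is as a small directed graph. The sketch below is illustrative only: the service names and the `DEPENDENTS` map are hypothetical and not taken from any real engagement. It walks the graph to list every system that a defect in one service could reach.

```python
from collections import deque
from typing import Dict, List

# Hypothetical map: each system -> the systems that consume its output.
DEPENDENTS: Dict[str, List[str]] = {
    "billing": ["ledger"],
    "ledger": ["reporting", "tax-export"],
    "reporting": [],
    "tax-export": [],
    "catalog": ["search"],
    "search": [],
}


def downstream_of(system: str, dependents: Dict[str, List[str]]) -> List[str]:
    """Return every system reachable from `system`, in breadth-first order."""
    seen = {system}
    order: List[str] = []
    queue = deque([system])
    while queue:
        current = queue.popleft()
        for consumer in dependents.get(current, []):
            if consumer not in seen:
                seen.add(consumer)
                order.append(consumer)
                queue.append(consumer)
    return order


# A billing defect can reach reporting, two layers away.
affected = downstream_of("billing", DEPENDENTS)
```

A list like `affected` is a reasonable starting point for deciding which workflows need coverage before any test is written.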

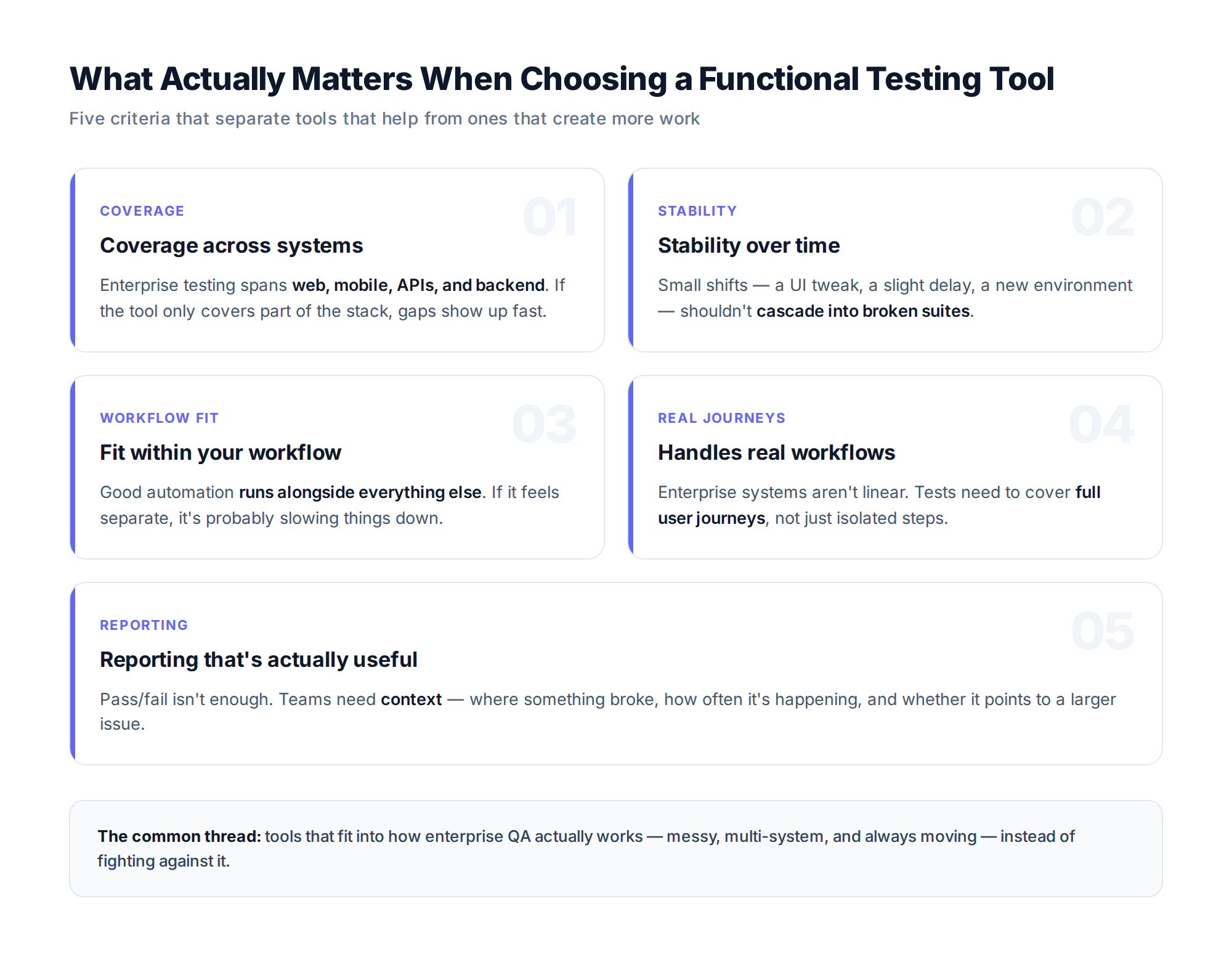

What Actually Matters When Choosing Functional Testing Tools

There is no shortage of tools that promise full coverage and easy automation. Most teams realize pretty quickly that it is not that simple.

A few things tend to separate the tools that help from the ones that create more work.

Coverage across systems matters first. Enterprise testing does not happen in one layer. You need visibility across web, mobile, APIs, and backend systems. If your tool only covers part of the stack, gaps show up quickly.

Stability over time is where things quietly fall apart. It is rarely one big issue. It is the small stuff. A UI tweak, a slight delay in load time, or running tests in a different environment can throw things off.

One failure leads to another, and before long you are spending more time fixing tests than using them. That is when automation starts to lose its value.
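A common way to absorb small timing differences is to poll for a condition instead of sleeping for a fixed interval. The following is a minimal sketch using only the Python standard library; `wait_until` and `SlowPage` are hypothetical names for illustration, not part of any particular testing tool.

```python
import time
from typing import Callable


def wait_until(condition: Callable[[], bool],
               timeout: float = 5.0,
               interval: float = 0.1) -> bool:
    """Poll `condition` until it returns True or `timeout` seconds elapse."""
    deadline = time.monotonic() + timeout
    while True:
        if condition():
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(interval)


class SlowPage:
    """Hypothetical stand-in for a page that becomes ready after a delay."""

    def __init__(self, ready_after: float) -> None:
        self._ready_at = time.monotonic() + ready_after

    def is_loaded(self) -> bool:
        return time.monotonic() >= self._ready_at


page = SlowPage(ready_after=0.2)
loaded = wait_until(page.is_loaded, timeout=2.0, interval=0.05)
```

The test proceeds as soon as the page is ready and fails only if the timeout is exceeded, so a slightly slower environment does not produce a false failure.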

Fit within your workflow matters more than most teams expect. Good automation does not feel separate. It runs alongside everything else. If it feels like something you have to manage manually, it is probably slowing things down.

You also need tools that can handle real workflows. Enterprise systems are not linear. Testing needs to reflect that by covering full user journeys, not just isolated steps.

And finally, reporting needs to be useful. A simple pass or fail is not enough. Teams need context around where something broke, how often it is happening, and whether it points to a larger issue.
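As a rough illustration of what that context might look like, the sketch below groups failures by the step that broke and by test name. The `RunResult` structure and the sample data are assumptions made for this example; real tools expose results in their own formats.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class RunResult:
    """One execution of one test (hypothetical structure)."""
    test_name: str
    passed: bool
    failed_step: Optional[str] = None  # where it broke, if it failed


def summarize_failures(results: List[RunResult]) -> Dict[str, object]:
    """Count failures by step and by test, not just pass or fail."""
    failures = [r for r in results if not r.passed]
    return {
        "total_runs": len(results),
        "total_failures": len(failures),
        "failures_by_step": dict(Counter(r.failed_step for r in failures)),
        "failures_by_test": dict(Counter(r.test_name for r in failures)),
    }


# Illustrative data only.
results = [
    RunResult("checkout", False, "payment-api"),
    RunResult("checkout", True),
    RunResult("refund", False, "payment-api"),
    RunResult("login", False, "login-form"),
    RunResult("login", True),
]
summary = summarize_failures(results)
```

Here two different tests fail at the same step, which points to a shared dependency rather than two unrelated problems.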

Common Mistakes Teams Still Make

Even with the right tools, certain patterns show up again and again.

- Leaning too heavily on UI tests, which are valuable but fragile

- Treating automation like a one-time setup instead of something that evolves

- Expecting tools to fix deeper workflow or collaboration issues

Most of the time, the challenge is not the tool. It is how everything around it is structured.

What Teams Do Differently When It Works

The teams that actually get value out of automated functional testing tools do not approach automation as something they need to complete.

They are more selective about where they invest their effort.

Instead of trying to automate everything, they focus on the parts of the system that really matter: the workflows users rely on every day, and the areas where something breaking would actually cause problems.

They also think in terms of reuse. Rather than rebuilding tests over and over, they create components that can be used across different scenarios. It keeps things manageable as the system grows.
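A minimal sketch of that idea, assuming tests are built from small step functions that share a context dictionary. The step names (`log_in`, `add_to_cart`, `check_out`) and the context keys are hypothetical.

```python
from typing import Any, Callable, Dict, List

Context = Dict[str, Any]
Step = Callable[[Context], None]


def log_in(ctx: Context) -> None:
    """Reusable step: establish a session for the user in the context."""
    ctx["session"] = "session-for-" + ctx["user"]


def add_to_cart(ctx: Context) -> None:
    """Reusable step: requires a session, then adds the item to the cart."""
    assert "session" in ctx, "add_to_cart requires a logged-in user"
    ctx.setdefault("cart", []).append(ctx["item"])


def check_out(ctx: Context) -> None:
    """Reusable step: turns the cart into an order."""
    assert ctx.get("cart"), "check_out requires a non-empty cart"
    ctx["order"] = {"items": list(ctx["cart"]), "status": "placed"}


def run_scenario(steps: List[Step], ctx: Context) -> Context:
    """Run the steps in order against one shared context."""
    for step in steps:
        step(ctx)
    return ctx


# Two scenarios built from the same components.
browse = run_scenario([log_in, add_to_cart], {"user": "alice", "item": "sku-1"})
purchase = run_scenario([log_in, add_to_cart, check_out],
                        {"user": "bob", "item": "sku-2"})
```

When the login flow changes, only `log_in` needs updating, and every scenario that uses it picks up the fix.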

There is usually a shift in where testing happens too. Instead of waiting for issues to show up in the UI, they catch things earlier at the API or integration level, before they have a chance to create noise later on.
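One lightweight form of an API-level check is validating that a response still has the fields and types its consumers expect. The sketch below uses only the standard library; `INVOICE_CONTRACT` and the payloads are made-up examples, and a real suite would typically fetch the payload from the service under test.

```python
from typing import Any, Dict, List

# Hypothetical contract: field name -> expected Python type.
INVOICE_CONTRACT: Dict[str, type] = {
    "invoice_id": str,
    "amount_cents": int,
    "currency": str,
}


def contract_violations(payload: Dict[str, Any],
                        contract: Dict[str, type]) -> List[str]:
    """Return a readable list of missing fields and type mismatches."""
    problems: List[str] = []
    for field, expected in contract.items():
        if field not in payload:
            problems.append("missing field: " + field)
        elif not isinstance(payload[field], expected):
            actual = type(payload[field]).__name__
            problems.append(
                field + ": expected " + expected.__name__ + ", got " + actual
            )
    return problems


good = {"invoice_id": "inv-1", "amount_cents": 1200, "currency": "USD"}
bad = {"invoice_id": "inv-2", "amount_cents": "1200"}

good_problems = contract_violations(good, INVOICE_CONTRACT)
bad_problems = contract_violations(bad, INVOICE_CONTRACT)
```

A check like this fails at the integration boundary with a specific message, instead of surfacing later as an unexplained UI failure.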

And maybe the biggest difference is how they think about testing overall. It is not treated as something separate that happens after development. It is just part of how the product gets built and shipped. When that mindset changes, the tools tend to work a lot better too.

How Modern Platforms Are Changing the Approach

As systems grow more complex, traditional automation approaches are starting to show their limits.

Newer platforms are shifting things by:

- Adapting to changes instead of constantly breaking

- Expanding coverage without full rewrites

- Prioritizing execution based on what actually matters

For enterprise application testing, that shift makes a real difference.

Platforms like Qyrus are part of that evolution. By bringing testing across different layers into a single, coordinated workflow, they help teams manage complexity without adding more overhead.

The focus moves away from maintaining tests and toward maintaining confidence.

The Future of Functional Testing

Automation is not slowing down, but expectations are changing.

Teams are starting to expect more from their tools. Not just execution, but support:

- Helping identify what to test

- Adjusting as systems change

- Highlighting gaps before they become issues

That does not replace QA. It raises the bar.

Less time fixing scripts. More time thinking about coverage, risk, and how the system actually behaves.

At the same time, visibility is becoming critical. As systems connect in more ways, understanding how changes ripple across the application matters more than ever.

Final Thoughts

So if you take everything else away, it really comes down to this. Does the application actually work the way it should?

That part has not changed.

What has changed is everything around it. Systems are more connected, releases move faster, and there is a lot less room for things to go wrong unnoticed.

Most teams already have functional testing tools for automation in place. That is not the hard part anymore.

What really matters is whether those tools are doing what they are supposed to do. Not just running tests but giving the team enough confidence to move forward without constantly second-guessing things.

When automation is in a good place, you do not really think about it. You are not stopping to question every failure or digging into logs trying to figure out if something is real. It runs, it catches what it needs to catch, and you trust it.

And that trust is what everything else depends on.

FAQs

What is automated functional testing?

Automated functional testing uses scripts or tools to verify that an application behaves the way it should. Instead of a tester manually clicking through each workflow, automated tests run those same checks on every release. The focus stays on what the application does, not how fast it runs or how secure it is.

Can functional testing be fully automated?

Not practically. Some things automate well. Login flows, CRUD operations, and payment processing have clear expected behavior, so scripts can validate them reliably. Others do not. Exploratory testing, usability feedback, and edge cases that have not been seen yet still need a person. Most mature QA teams aim for high automation on repetitive workflows and keep skilled manual testing in the loop for everything else.

What is the difference between functional testing and end-to-end testing?

Functional testing checks whether individual features work as expected: a single form, an API endpoint, or a specific workflow. End-to-end testing validates the full chain of systems that make up a business process. You can have passing functional tests on each component and still have a broken end-to-end flow because of how the pieces interact.
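A toy illustration of that last point, with two hypothetical components that each pass their own check but disagree about the shape of the data passed between them:

```python
from typing import Any, Dict


def price_lookup(sku: str) -> Dict[str, Any]:
    """Hypothetical pricing component: returns the price in dollars."""
    return {"sku": sku, "price": 12.5}


def invoice_total(line: Dict[str, Any], quantity: int) -> int:
    """Hypothetical billing component: expects the price in cents."""
    return line["price_cents"] * quantity


def check_price_lookup() -> None:
    # Functional check on the pricing component alone.
    assert price_lookup("sku-1")["price"] == 12.5


def check_invoice_total() -> None:
    # Functional check on the billing component alone.
    assert invoice_total({"sku": "sku-1", "price_cents": 1250}, 2) == 2500


def run_end_to_end(sku: str, quantity: int) -> int:
    # The real flow feeds one component's output into the other.
    return invoice_total(price_lookup(sku), quantity)
```

Both component checks pass, yet `run_end_to_end("sku-1", 2)` raises a `KeyError`, because pricing returns `price` while billing reads `price_cents`. Only a test that exercises the whole chain would catch it.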

How do you know when an automation suite is becoming a maintenance burden?

A few signs. Tests fail for reasons that have nothing to do with the application. Engineers start re-running failed tests to see if they pass the second time. Nobody trusts red builds enough to investigate them. When the team spends more time fixing tests than fixing bugs, the suite has drifted. Usually the root cause is design, not the tool itself.
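One of those signs can be measured directly. The sketch below assumes you can collect pass/fail outcomes for each test across repeated runs against the same build; the `history` data is invented for illustration. A test that both passes and fails on unchanged code is treated as flaky.

```python
from typing import Dict, List


def flaky_tests(history: Dict[str, List[bool]]) -> List[str]:
    """Names of tests with mixed outcomes on the same build."""
    return sorted(
        name for name, outcomes in history.items()
        if any(outcomes) and not all(outcomes)
    )


def flake_rate(history: Dict[str, List[bool]]) -> float:
    """Fraction of tests that are flaky (0.0 for an empty history)."""
    if not history:
        return 0.0
    return len(flaky_tests(history)) / len(history)


# Illustrative outcomes from three runs of the same build.
history = {
    "login": [True, True, True],        # stable pass
    "checkout": [False, True, True],    # passed only on re-run
    "export": [False, False, False],    # consistent failure, likely a real bug
    "search": [True, False, True],      # intermittent
}

flaky = flaky_tests(history)
rate = flake_rate(history)
```

Tracking a number like `rate` over time gives the team an early signal that the suite is drifting, before people stop trusting red builds.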

Which functional testing tools work best for enterprise applications?

There is no universal answer. The right tool depends on your tech stack, how your team works, and how much framework maintenance you are willing to take on. The more honest question is whether the tool supports how your systems actually behave across layers, under load, and when integrations change, not just whether it can execute a test case.

How much of your testing should be automated?

It depends on the system, but the common pattern is to automate workflows that run constantly and matter most if they break: core user journeys, critical API contracts, and regressions on stable features. New features, edge cases, and anything requiring human judgment usually benefit from manual testing first, then get automated once the behavior stabilizes.
