Your AI agent just placed 47 duplicate orders. It called the wrong API three times in a row. It looped through the same workflow for six minutes before anyone noticed.

Nobody caught it in testing because nobody built the right tests.

That's not a hypothetical. Enterprises using AI agents face this exact problem every week. The AI agent works perfectly in staging, but fails silently in production, and by the time the on-call engineer gets alerted, real customers are already affected.

AI Agent Testing Services exist to stop this before it starts. AI agent testing helps identify these issues early and ensures reliable behaviour in production.

This guide covers what AI Agent Testing Services are, why they are harder to get right than traditional software testing, and what a proper evaluation framework looks like, including LLM Testing, LLM evaluation for enterprises, and best practices for testing AI applications.

What Are AI Agent Testing Services?

AI agents are not chatbots. They don’t just wait for input, give a response, and stop. They plan. They think through multiple steps. They call them tools, APIs, and databases. They make decisions using incomplete information. Then they act on those decisions, often without human review.

AI Agent Testing Services are a specialised way to test these systems. AI agent testing focuses on checking autonomous systems that work across multiple steps for accuracy, reliability, and safety.

Unlike basic LLM testing, where you test simple input and output, here you are testing a system that behaves differently based on context, previous steps, available tools, and what the model decides to do next.

This makes LLM evaluation for enterprises more complex when evaluating AI agents. The scope is very different from traditional software QA and even standard approaches used when testing AI applications. You are not just checking outputs. You are testing decision paths, tool interactions, memory handling, error recovery, and whether the agent knows when to stop or ask for help.

{{cta-image}}

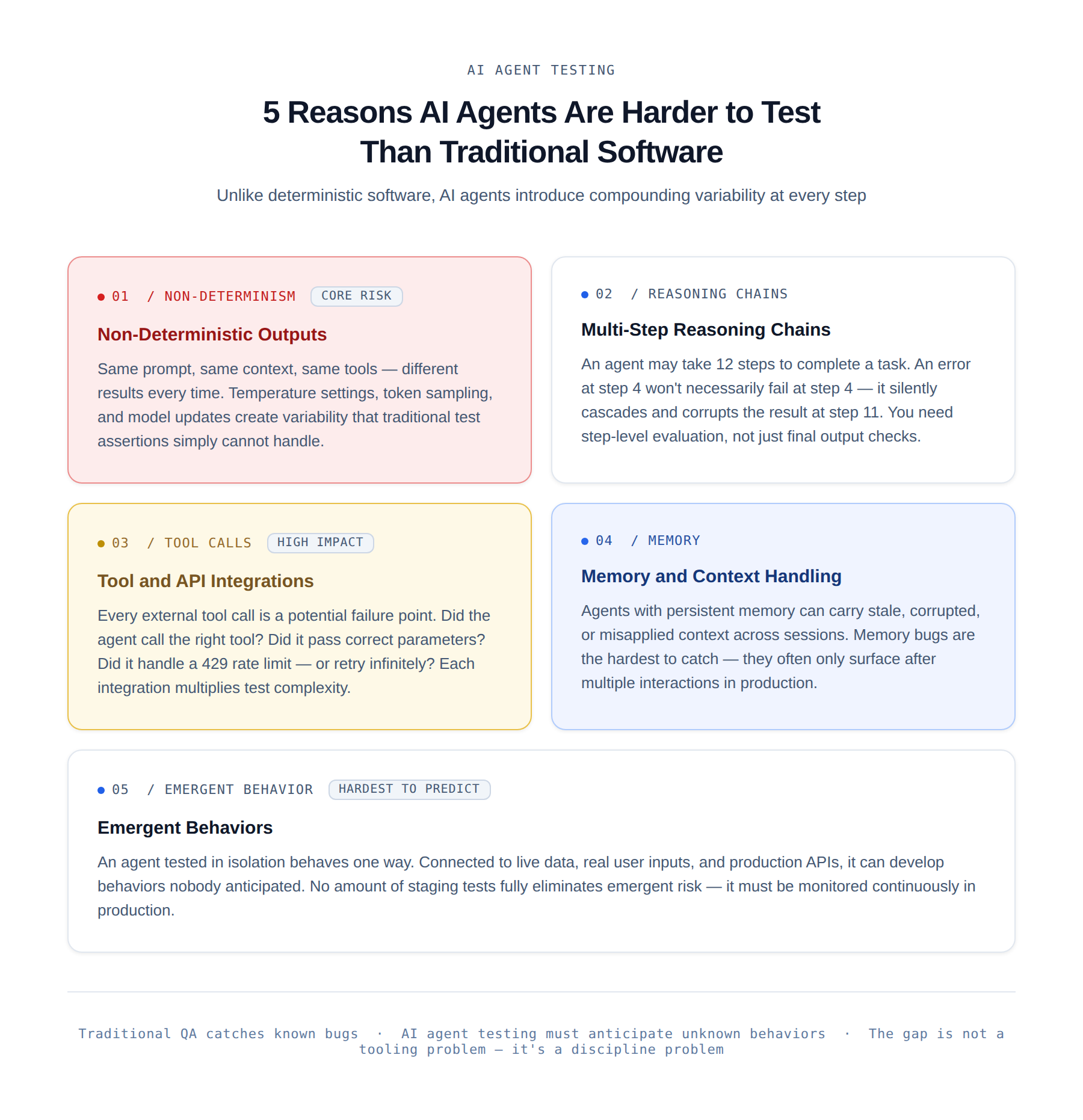

Why AI Agents Are Harder to Test Than Traditional Software

Traditional software has deterministic behaviour. Given the same input, you get the same output. You write a test, run it, and check the result. Done. AI agents do not work that way.

This is what makes AI agent testing much harder compared to traditional software testing and even standard LLM testing.

1. Non-deterministic outputs

- The same prompt, context, and tools can still produce different results.

- Factors like temperature settings, token sampling, and model updates create variability.

- In testing AI applications, you cannot simply check for one exact output.

2. Multi-step reasoning chains.

- An AI agent may take many steps to complete a task. An error at step 4 may not show up immediately.

- It can continue and create a wrong result at step 11.

- In LLM evaluation for enterprises, this means you need to evaluate every step, not just the final output.

3. Tool and API integrations.

- Most AI agents use external tools such as search engines, databases, calendars, payment systems, and internal APIs.

- Each tool call can fail. You need to test if the agent selected the correct tool, passed the right data, and correctly used the response.

- This is a key part of AI Agent Testing Services.

4. Memory and context handling.

- Some AI agents store memory across sessions or during long conversations.

- If the memory is incorrect, outdated, or used in the wrong way, the agent’s behaviour changes.

- These issues are difficult to reproduce and diagnose during AI agent testing.

5. Emergent behaviours.

- This often surprises teams. An AI agent may behave correctly during testing but act differently in real environments with live data and real users.

- In LLM testing and testing AI applications, you must account for these unexpected behaviours as part of a strong evaluation strategy.

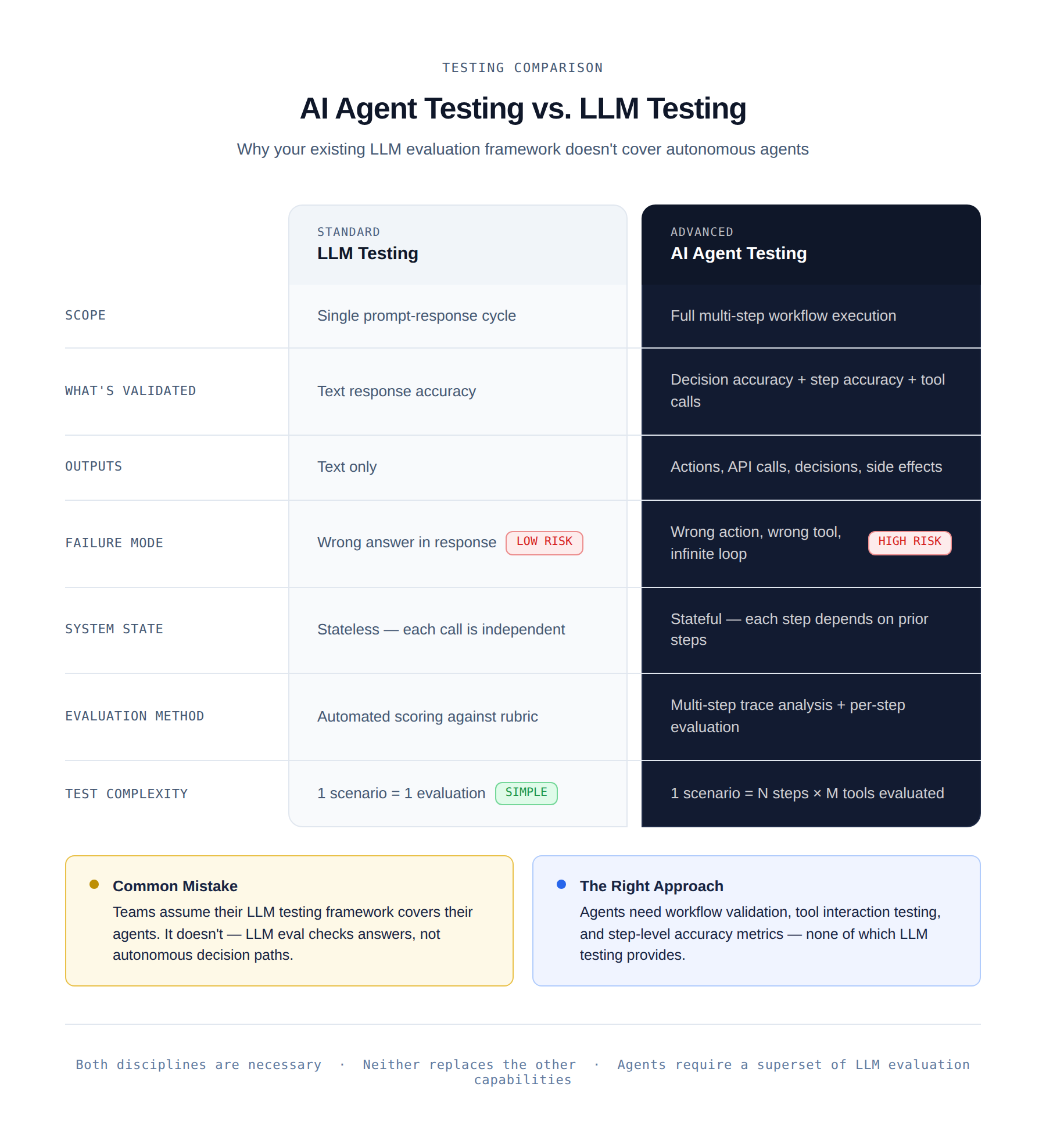

AI Agent Testing vs. LLM Testing: What's the Difference?

LLM testing evaluates a single model call.

You send a prompt, check the response against a rubric or set of criteria, and measure accuracy. It's relatively straightforward.

AI agent testing is a different problem entirely.

When you're doing LLM evaluation for enterprises, you care about whether the model gives a good answer. When you're doing AI agent testing, you care about whether the agent completes the task correctly, without making harmful side effects along the way.

The distinction matters because teams often assume that their LLM testing or AI application testing framework covers their agents. It doesn't.

Core Dimensions of AI Agent Testing

A complete AI Agent Testing Services strategy covers eight distinct areas. Most teams start with one or two and later realise that the gaps create serious problems in production when testing AI applications.

1. Functional Accuracy

- Does the agent actually complete the task it was given? This sounds simple, but measuring it is not.

- In AI agent testing, task completion needs to be broken into smaller sub-goals that can be verified.

- For example, “summarise the customer’s issue and create a support ticket” involves multiple steps, and each step can fail independently.

2. Hallucination Detection

- AI agents can generate false information and then act on it. This is different from basic LLM testing, where hallucination is limited to text output.

- In agents, a wrong customer ID or incorrect data can trigger real actions.

- So in LLM evaluation for enterprises, you must check not only what the agent says but also what actions it takes based on that information.

3. Decision-Making Reliability

- Does the agent choose the correct action at each step? Given the same situation, does it behave consistently?

- AI agent testing requires running the same scenarios multiple times, checking variations, and identifying when the agent makes wrong decisions.

4. Tool and API Interaction Testing

- Each tool or API call becomes a test case. Did the agent select the correct tool? Did it send the right inputs? Did it handle errors like rate limits properly, or did it keep retrying endlessly?

- This is a critical part of AI Agent Testing Services, especially when testing AI applications connected to external systems.

5. Memory Consistency Testing

- If the agent uses memory, you must test how it stores and uses that memory. Does it mix up user data? Does it rely on outdated information when new data is available?

- These issues are difficult to detect in both LLM testing and agent systems because they often appear only after multiple interactions.

6. Bias and Fairness

- Agents that interact with users must be tested for bias. Does the agent behave differently based on names, demographics, or wording that should not affect outcomes?

- Proper LLM evaluation for enterprises includes testing across diverse datasets to ensure fairness.

7. Safety and Guardrails

- Can the agent avoid harmful actions? Does it stay within defined boundaries? Can it be manipulated into doing something unsafe?

- Safety testing is a key part of AI agent testing, especially in regulated industries and sensitive use cases.

8. Performance and Latency

- How long does each step take? Where are the delays? An agent can be accurate but still fail if it is too slow.

- AI Agent Testing Services include performance checks at each step, not just the final result, to improve overall system efficiency.

{{cta-image-second}}

Key Challenges in Testing AI Agents

Even teams that understand the areas of AI Agent Testing Services still face real challenges.

These are the most common ones when doing AI agent testing and testing AI applications at scale.

1. No fixed expected outputs

- You cannot write traditional test assertions. Instead, you need evaluation rules, LLM-as-judge systems, or human review workflows.

- In LLM testing and LLM evaluation for enterprises, building these systems requires time and expertise that many QA teams do not have.

- For software companies looking to bridge this gap, teams like Employee Number Zero provide specialized implementation of AI and automation solutions to ensure production-readiness.

2. Complex evaluation loops

- AI agents work in multiple steps, and each step needs to be evaluated.

- One test run can include many decisions, and each one must be checked.

- Without automation, this process becomes very difficult in AI Agent Testing Services.

3. High variability

- AI systems do not always give the same result. Running the same test multiple times can produce different outcomes.

- In AI agent testing, you need to measure consistency across runs instead of relying on a single result.

4. Edge case explosion

- AI agents create many unexpected scenarios. With multiple tools and inputs, the number of possible execution paths becomes very large.

- In testing AI applications, you cannot test everything.

- You need smart strategies that focus on high-risk areas instead of trying to cover all cases.

5. Cost of continuous testing

- Running LLM models many times for testing is expensive.

- In large-scale LLM evaluation for enterprises, teams must manage costs by optimising test runs, using caching, and deciding when to run full test suites versus smaller targeted tests.

How to Build an AI Agent Test Suite

Here is a practical approach that teams can actually use when implementing AI Agent Testing Services and improving AI agent testing for real systems.

1. Start with scenario-based testing

- Define the most important tasks your agent needs to complete.

- Create scenarios based on real user inputs and expected results.

- This helps build a strong foundation before moving to advanced LLM testing or complex evaluation methods when testing AI applications.

2. Add multi-step workflow validation

- For each scenario, define the expected sequence of steps.

- Which tools should be used? In what order? What decisions should be made at each step?

- In AI agent testing, it is important to validate the full workflow, not just the final output.

3. Build tool interaction test cases

- For every tool the agent uses, create test cases for normal behavior, common errors, incorrect inputs, and edge cases.

- Mock these tools so you can control their responses.

- This is a key part of AI Agent Testing Services and also supports better LLM evaluation for enterprises.

4. Run adversarial testing

- Try to break the agent. Give unclear instructions, attempt prompt injection, provide incorrect or confusing data, and test situations outside normal use cases.

- This helps identify safety and reliability issues during AI agent testing before they appear in production.

5. Set up regression testing

- Whenever you update the model, prompts, tools, or system, run the full test suite again.

- In testing AI applications, agent behavior can change after updates, and these changes are easy to miss without proper regression testing.

Metrics That Matter in AI Agent Evaluation

These are the key metrics used in AI Agent Testing Services to understand if your system is ready for production when doing AI agent testing and testing AI applications.

1. Task success rate

- What percentage of complete tasks does the agent finish correctly?

- This is the main metric in both LLM testing and agent evaluation, but it does not show the full picture.

2. Step accuracy

- At each step in a multi-step workflow, how many decisions are correct?

- A task success rate of 80% may look good, but step-level analysis can show weak points.

- For example, one step may only be 60% accurate, and later steps may be hiding that issue. This is critical in LLM evaluation for enterprises.

3. Tool call accuracy

- Out of all tool calls made by the agent, how many are correct and use the right inputs?

- If task success is high but tool accuracy is low, the agent is not reliable and may fail in real situations.

- This is an important part of AI Agent Testing Services.

4. Latency per step

- How much time does each step take? Where are the slow parts?

- Looking only at total time does not help improve performance.

- Step-level latency is important when testing AI applications.

5. Failure recovery rate

- When something goes wrong during a task, how does the agent respond? Does it retry, escalate, or stop correctly? Or does it get stuck in a loop?

- Recovery behavior is often ignored in AI agent testing, but it is very important.

6. Consistency score

- Run the same scenario multiple times and check how consistently the agent behaves.

- High variation means low reliability, even if the average result looks acceptable.

- This is a key part of LLM testing and enterprise-level evaluation.

CI/CD for AI Agents: Continuous Testing

One-time testing is not enough. AI agents change frequently. Models get updated. Prompts get adjusted. Tools get added.

Every change can impact behavior in unexpected ways. This is why continuous AI Agent Testing Services and AI agent testing are important.

- Automated pipelines: Run tests on every code change using an AI testing platform as part of AI Agent Testing Services.

- CI/CD integration: Include LLM testing and LLM evaluation for enterprises in every deployment.

- Real-time monitoring: Track live behavior when testing AI applications in production.

- Feedback loop: Use production issues to improve AI agent testing over time.

Why Enterprises Can't Skip AI Agent Testing

The cost of skipping AI Agent Testing Services goes beyond engineering. It affects business, trust, and compliance when testing AI applications is ignored.

- Reputation risk: One failure can impact customer and regulator trust.

- Compliance pressure: Enterprises must prove proper testing.

- Ongoing need: AI Agent Testing Services are continuous, not one-time.

{{cta-image-third}}

Conclusion

AI Agent Testing Services are essential for building reliable, safe, and scalable AI systems in modern enterprises. Without proper AI agent testing, even advanced systems can behave unpredictably and create real business risks.

Enterprises that invest in strong testing AI applications frameworks gain better control, reduce failures, and build long-term trust in AI-driven automation. This is what separates experimental AI from production-ready systems.

At Alphabin, AI agent testing is not a one-time activity but a continuous process that evolves with your system. Strong LLM testing, LLM evaluation for enterprises, and continuous monitoring ensure your AI applications remain reliable and production-ready.

FAQs

1. What are AI Agent Testing Services?

AI Agent Testing Services help evaluate autonomous AI systems to ensure they work correctly, make accurate decisions, and interact safely with tools and APIs. Unlike basic LLM testing, it focuses on multi-step workflows and real-world behavior.

2. How is AI agent testing different from LLM testing?

LLM testing checks single prompt-response accuracy, while AI agent testing evaluates full workflows, decisions, tool usage, and system behavior across multiple steps. It is more complex and required for testing AI applications with automation.

3. Why is AI agent testing important for enterprises?

AI agent testing helps prevent costly failures, ensures compliance, and builds trust in automation. Strong LLM evaluation for enterprises ensures AI systems are reliable before deployment.

4. What are the key metrics in AI agent testing?

Important metrics include task success rate, step accuracy, tool call accuracy, latency, failure recovery rate, and consistency. These help measure how reliable and production-ready your AI agent is.

5. Can AI agent testing be automated?

Yes, AI agent testing can be automated using evaluation frameworks, CI/CD pipelines, and monitoring tools. However, a combination of automation and human review is often needed for accurate LLM evaluation for enterprises.

.svg)

%20(1).png)

%20(1).png)

%20(1).png)