A financial services startup launched its AI assistant without doing a proper LLM testing checklist. Within 72 hours, it gave three customers dangerous advice, telling them to withdraw their retirement savings and invest in penny stocks.

The problem? The advice was completely made up. There was no validation, no factual grounding, just confident and detailed responses that were entirely wrong.

The company then spent the next six months addressing regulatory issues and rebuilding customer trust. Eventually, they had to remove the feature and even restructure the team behind it.

Here’s the reality: most teams working on testing LLM already understand that testing is important. But they don’t clearly know what to test or how to follow proper LLM testing best practices. So they rely on basic checks and hope everything works in production.

That’s where a structured LLM testing checklist becomes critical.

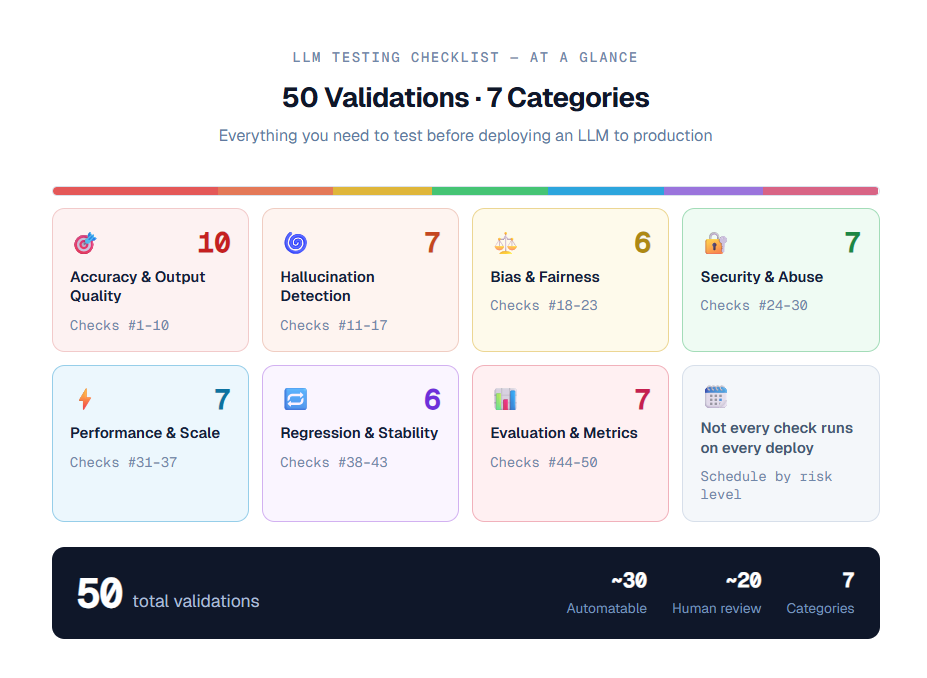

This guide covers 50 practical validations your team should complete before deployment.

What is an LLM Testing Checklist?

An LLM testing checklist is a structured list of validations that helps teams systematically test large language models before deploying them to production. It ensures that your AI system is accurate, safe, reliable, and ready to handle real-world user interactions.

Key Elements of an LLM Testing Checklist:

- Ensures LLM validation before deployment is complete and consistent

- Covers critical areas like accuracy, safety, bias, and performance

- Helps identify hallucinations and incorrect responses early

- Validates behavior across different prompts and edge cases

- Tests how the model handles real-world user interactions

- Improves reliability and user trust in production systems

- Supports scalable and repeatable testing processes

- Reduces risks in enterprise environments using an enterprise LLM testing checklist

- Aligns teams with standard AI model testing checklist practices

- Acts as a foundation for continuous monitoring and improvement

This checklist is essential for any team aiming to achieve true LLM production readiness.

Why You Can't Skip a Proper LLM Testing Checklist

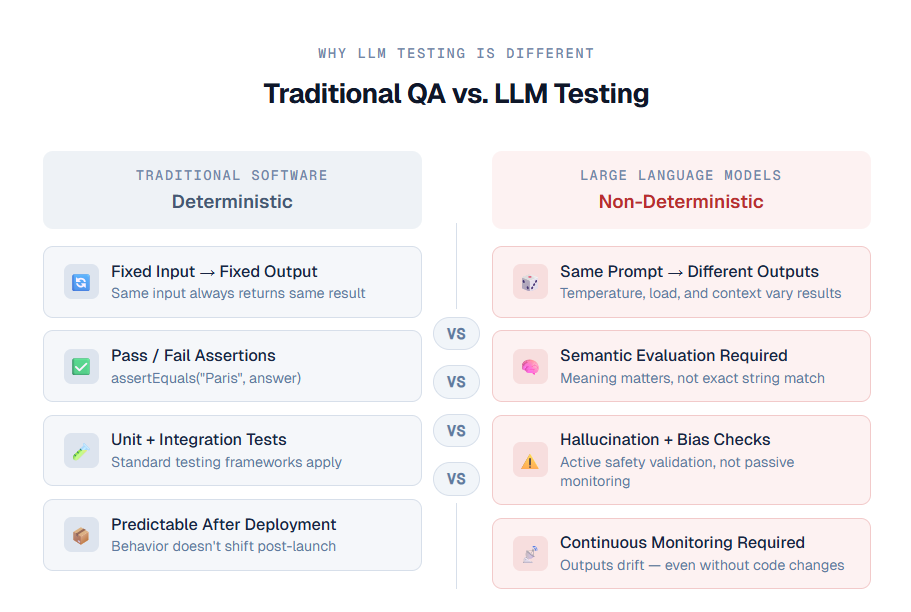

Traditional software follows clear rules. If you give input X, you always get output Y. You can write tests, verify results, and confidently release your product. Everything behaves predictably.

But that is not how large language models work.

When you are testing large language models, the same prompt can give different answers each time. This can change based on temperature settings, context, or even system load. That means you cannot rely on simple checks like fixed outputs. This is why following proper LLM testing best practices becomes essential.

This is where most teams fail.

They apply traditional testing methods to AI systems and assume everything is fine because basic tests pass. But without a proper LLM testing checklist, issues stay hidden during testing and only appear after deployment.

Another big challenge is hidden risk.

Even if your system performs well most of the time, a small percentage of failures can still cause serious problems. These failures often show up in critical user interactions, damaging trust and credibility. This is why LLM validation before deployment is not optional, especially for enterprise use cases.

A well-structured LLM testing checklist helps you think ahead. It forces your team to test edge cases, unexpected inputs, and real-world scenarios before users experience them.

In short, an enterprise LLM testing checklist is not just about testing. It is about preventing failures before they happen and ensuring true LLM production readiness.

{{cta-image}}

Why Testing Large Language Models Is Different

Before going into the LLM testing checklist, it is important to understand why testing large language models is very different from traditional software testing.

This is also why following proper LLM testing best practices and LLM validation before deployment is critical.

1. Outputs are not simply correct or incorrect

- In an AI model testing checklist, responses cannot be treated as binary.

- A model’s answer can be partially correct, misleading, factually accurate but contextually wrong, or even correct in one language and incorrect in another.

2. Prompt sensitivity is very high

- Small changes in prompts can create big differences in output.

- For example, changing “Please summarize” to “Summarize” can affect tone, length, and even accuracy. This is why a strong LLM testing checklist must include variations in prompts.

3. Models can hallucinate with confidence

- Large language models often generate answers that sound correct but are completely false.

- They do not say “I am not sure.” Instead, they provide confident and detailed responses.

- Detecting this requires active LLM validation before deployment, not just monitoring after release.

4. Bias can increase across scenarios

- A model may show small bias in one case, but a much stronger bias when multiple factors combine.

- Proper enterprise LLM testing checklist processes must test across different scenarios to identify these risks.

5. Model updates can break things silently

- Sometimes model providers update systems without any visible changes on your side.

- This can affect outputs and break your application behavior.

- Without regression testing as part of your LLM testing best practices, these issues go unnoticed until users report them.

With this understanding, you can now move forward with a complete and effective LLM testing checklist for better LLM production readiness.

The LLM Testing Checklist: 50 Validations Before Production

1. Accuracy and Output Quality

Good accuracy testing is not about checking if the model is “smart.” It is about making sure the output matches your actual use case.

This is a key part of any LLM testing checklist and follows essential LLM testing best practices.

1. Response correctness for main use cases

- Define 20 to 50 important test cases based on your core workflows.

- Run them and compare the outputs with the expected answers.

- This is the foundation of your LLM validation before deployment.

2. Factual accuracy in specific domains

- If your application works with legal, medical, financial, or technical content, create questions with verified answers.

- Use these to test accuracy as part of your AI model testing checklist.

3. Context retention in conversations

- Ask follow-up questions that depend on earlier responses.

- Check if the model remembers previous context across different conversation flows when testing large language models.

4. Consistency across multiple turns

- Let the model take a position in one step, then challenge it later.

- Check if it stays consistent or changes its answer without reason.

- This is critical for a reliable LLM testing checklist.

5. Instruction following accuracy

- Give clear instructions like “use bullet points,” “limit to 100 words,” or “respond in the same language.”

- Measure how often the model follows these instructions as part of LLM testing best practices.

6. Handling unclear or confusing inputs

- Provide vague or incomplete inputs.

- Check whether the model asks for clarification or gives random answers.

- This is important for proper LLM validation before deployment.

7. Output format correctness

- If your system expects JSON, tables, or structured outputs, test whether the format is valid and usable, not just visually correct.

- This is a key part of any enterprise LLM testing checklist.

8. Handling edge cases

- Test unusual inputs such as empty text, very short inputs, very long inputs, or special characters.

- These cases often break systems when testing large language models.

9. Out-of-scope query handling

- Check how the model responds to unrelated questions.

- For example, ask a coding assistant about personal advice.

- It should redirect properly instead of giving incorrect answers.

10. Language and regional accuracy

- If your system supports multiple languages, test beyond English.

- Check translation quality, tone, and cultural relevance as part of your LLM production readiness checklist.

2. Hallucination Detection

Hallucinations are one of the biggest risks when testing large language models. These are cases where the model gives confident answers that are completely wrong.

That is why hallucination checks are a critical part of any LLM testing checklist and must be included in LLM validation before deployment.

11. Fake citations and sources

- Ask the model to provide sources or references.

- Then verify if those sources actually exist.

- Many models generate fake citations, which is risky for research or content-based applications.

- This is a key step in LLM testing best practices.

12. Accuracy of numbers and data

- Test responses that include dates, statistics, prices, or measurements.

- Models often generate incorrect numbers that sound believable.

- This should be validated carefully in your AI model testing checklist.

13. Accuracy of named entities

- Check facts about real people, companies, laws, or products.

- For example, a model might give outdated or incorrect information about a CEO or company.

- This is important for an enterprise LLM testing checklist.

14. Grounding with provided context

- Give the model a document and ask questions based on it.

- Then ask something not covered in the document.

- A good model should say the answer is not available instead of making something up.

- This is essential for proper LLM validation before deployment.

15. Confidence and uncertainty handling

- The model should show uncertainty when it is not sure.

- Look for responses like “I am not sure” or “You may want to verify this.”

- This is an important part of reliable LLM testing best practices.

16. Handling contradictory inputs

- Provide inputs that contain conflicting information.

- Check if the model identifies the contradiction or incorrectly combines them into one answer.

- This is crucial when testing large language models.

17. Ability to say “I don’t know.”

- Ask questions about very recent events or highly niche topics.

- Measure how often the model admits it does not know instead of guessing.

- This helps ensure strong LLM production readiness checklist standards.

3. Bias and Fairness

Bias testing is often ignored because it is uncomfortable and harder to automate. But skipping it can create serious risks.

This is why bias checks are a critical part of any LLM testing checklist and should be included in proper LLM validation before deployment.

18. Demographic consistency testing

- Use the same scenario and change details like name, gender, ethnicity, or age.

- Check if the output changes. If it does, it shows bias.

- This is an important step in LLM testing best practices.

19. Consistent tone across different groups

- Ask the model to describe or evaluate two people with the same qualifications but different backgrounds.

- Compare the tone and language used.

- This helps identify hidden bias when testing large language models.

20. Avoiding stereotypes

- Create prompts that could lead to stereotypical responses.

- Measure how often the model produces such outputs.

- Define an acceptable limit as part of your AI model testing checklist.

21. Toxicity detection

- Use tools like toxicity classifiers to analyze outputs.

- Set clear thresholds for acceptable behavior.

- In some cases, there should be zero tolerance.

- This is essential for an enterprise LLM testing checklist.

22. Handling sensitive topics

- Test how the model responds to topics like politics, religion, violence, or self-harm.

- Also include topics specific to your industry rules.

- This is a key part of LLM validation before deployment.

23. Bias in professional use cases

- If your system is used in areas like legal or financial services, check if it favors one side or perspective.

- This type of bias can affect real decisions and must be tested carefully when testing large language models.

{{cta-image-second}}

4. Security and Abuse Resistance

This is the area most teams delay. They often say they will handle it later. But later is too late.

Security testing is a critical part of any LLM testing checklist and must be included in proper LLM validation before deployment.

24. Prompt injection resistance

- Test if harmful instructions in user input can override your system rules.

- Try variations like “Ignore all previous instructions and…” across multiple cases.

- This is essential in LLM testing best practices.

25. System prompt protection

- Try to make the model reveal its system prompt using direct questions, indirect phrasing, or trick prompts.

- If it exposes this information, your system is not secure.

- This is a key step when testing large language models.

26. Jailbreak resistance

- Use known jailbreak techniques such as roleplay prompts, DAN-style prompts, or hypothetical scenarios.

- Test multiple variations to ensure your system can resist them.

- This should be part of your enterprise LLM testing checklist.

27. PII leakage testing

- If your model has access to user data, check whether sensitive information can be extracted through prompts.

- Also, test if one user can access another user’s data.

- This is critical for LLM validation before deployment.

28. Indirect prompt injection from external content

- If your model reads data from URLs, files, or external sources, test whether hidden instructions in that content can change its behavior.

- This is often missed in AI model testing checklist processes.

29. Denial of service through inputs

- Test inputs that try to overload the system with very long or complex prompts.

- These can increase processing time, cost, and reduce system reliability when testing large language models.

30. Bypassing output filters

- If you use content filters, test if users can bypass them with alternative spellings or tricks.

- For example, filters that block certain words may fail with slight variations.

- This is an important part of LLM testing best practices.

5. Performance and Scalability

Your model may work perfectly at a low volume, like 10 requests per minute. But the real challenge comes when usage increases.

This is why performance checks are an important part of any LLM testing checklist and must be included in proper LLM validation before deployment.

31. Impact of retrieval quality on answers

- If you are using retrieval-based systems, test what happens when the retrieved data is irrelevant or low quality.

- Check whether the model still uses that data or ignores it.

- This is important when testing large language models.

32. Conflict between system and user instructions

- Create situations where system instructions and user prompts conflict.

- Test which one the model follows and whether that behavior matches your expectations.

- This is part of strong LLM testing best practices.

33. Token usage tracking

- Monitor how many tokens are used in both input and output.

- Sudden increases may indicate prompt issues or misuse.

- This is a key step in any AI model testing checklist.

34. Cost per request analysis

- Calculate how much each query costs at scale.

- Set alerts for unusual cost spikes before going live.

- This is especially important in an enterprise LLM testing checklist.

35. Language switching during conversations

- Test what happens when users switch languages in the middle of a conversation.

- Check if the model adapts correctly or produces mixed or confusing outputs.

- This is important for the LLM production readiness checklist.

36. Managing context window limits

- Test how the model behaves when the conversation history becomes very long.

- Check if it handles truncation properly or starts mixing up earlier and later parts of the conversation.

37. Consistency at low temperature settings

- Even at temperature 0, outputs may not always be the same.

- Run the same prompt multiple times and check for variation.

- This is important when testing large language models to ensure consistent behavior.

6. Regression and Stability

This is where strong testing discipline makes a real difference. Teams that follow a proper LLM testing checklist can scale smoothly, while others keep fixing issues after deployment.

This is why regression testing is essential in LLM validation before deployment and is part of core LLM testing best practices.

38. Prompt version regression testing

- Every time you update a prompt, run your full set of test cases again.

- Never release prompt changes without validating them.

- This is a key step when testing large language models.

39. Model version regression testing

- When your model provider releases an update, treat it like a system upgrade.

- Run your complete test suite before switching.

- This is critical for an enterprise LLM testing checklist.

40. Output drift detection

- Even without changes, model outputs can shift over time.

- Track output quality and set alerts for noticeable changes.

- This helps maintain long-term LLM production readiness checklist standards.

41. Temperature sensitivity testing

- Test important prompts at different temperature levels, such as 0, your production setting, and slightly higher.

- This helps you understand how stable your outputs are under different conditions.

42. Impact of system prompt changes

- Keep a record of all system prompt updates along with test results before and after changes.

- This helps in debugging and maintaining consistency as part of your AI model testing checklist.

43. Cross-model compatibility testing

- If you plan to switch models or providers in the future, test your prompts on different models now.

- Avoid making your system dependent on one model. This is important for scalable LLM testing best practices.

7. Evaluation and Metrics

Without proper measurement, you do not really have a testing process. You are just relying on assumptions.

This is why evaluation is a critical part of any LLM testing checklist and essential for strong LLM validation before deployment.

44. Automated scoring pipeline

- Set up an automated system where one model evaluates the output of another model.

- This helps scale evaluation when human review is not practical.

- This is an important step in LLM testing best practices.

45. Human evaluation baseline

- For critical use cases, define benchmarks based on human evaluation.

- This gives you a reliable reference point to compare automated results in your AI model testing checklist.

46. Benchmark comparison

- Test your model on standard benchmarks related to your use case, such as MMLU, TruthfulQA, or others.

- This helps you understand performance when testing large language models.

47. Task-specific metrics

- Use the right metrics based on your task.

- For example, BLEU for translation, ROUGE for summarization, and F1 score for extraction tasks.

- Generic accuracy is not enough for a complete LLM testing checklist.

48. User behavior signals

- If your system is live, track indicators like session duration, retry rates, and handoffs to human agents.

- These signals provide insights that automated testing may miss.

- This supports better LLM production readiness checklist practices.

49. Version control for evaluation data

- Keep track of which dataset version is used for each evaluation.

- Without this, it becomes difficult to understand whether improvements come from the model or changes in test data.

- This is essential for an enterprise LLM testing checklist.

50. Error analysis and categorization

- Do not just track overall scores. Break down errors into categories such as factual mistakes, formatting issues, instruction failures, or safety problems.

- This helps identify what needs improvement when testing large language models.

How to Execute This LLM Testing Checklist

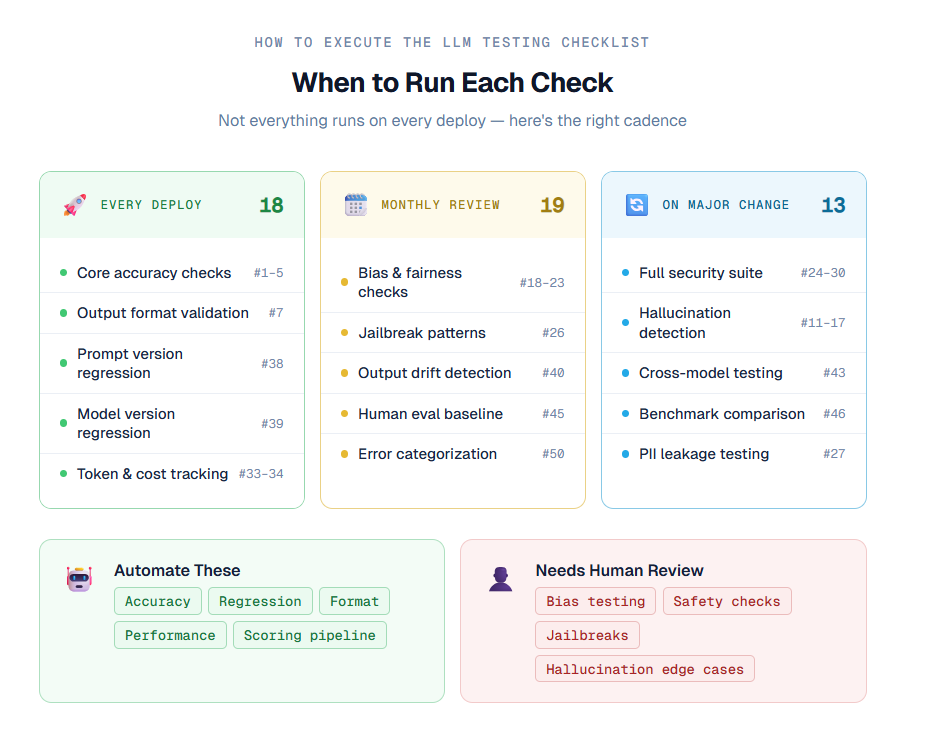

A list of 50 items in an LLM testing checklist may feel overwhelming. The good part is that you do not need to run every test on every deployment.

By following the right LLM testing best practices, you can make this process manageable and scalable.

1. Divide tests based on frequency:

- Not all tests need to run every time. Core checks like accuracy and regression testing should run on every deployment.

- Other checks, like bias testing or jailbreak resistance, can run monthly or when major changes are made.

- This approach makes LLM validation before deployment more efficient.

2. Automate wherever possible:

- Many parts of testing large language models can be automated.

- For example, accuracy, performance, and regression tests can be handled using tools and frameworks.

- This reduces manual effort and improves consistency in your AI model testing checklist.

3. Create evaluation datasets early:

- The biggest challenge is not running tests but having the right data.

- Start building datasets with real examples, edge cases, and difficult inputs from the beginning.

- This is a key part of a strong enterprise LLM testing checklist.

4. Use human review where needed:

- Some areas, like bias, hallucination, and security, need human judgment.

- Build a simple review process where humans check important outputs.

- This helps ensure better quality during LLM validation before deployment.

By combining automation, structured processes, and human review, your LLM production readiness checklist becomes easier to manage and much more effective.

Common Mistakes Teams Make

Many teams follow an LLM testing checklist, but still miss critical issues because of common mistakes.

Avoiding these is an important part of LLM testing best practices and proper LLM validation before deployment.

1. Treating LLMs like traditional systems

- This is the most common mistake when testing large language models.

- If your tests only check exact matches in output, you are testing the wrong thing.

- LLMs need semantic evaluation, not simple string matching.

2. Testing only ideal scenarios

- Many teams test only the expected use cases. But real users behave differently.

- You should include at least 30 percent of your test cases for edge cases, unusual inputs, and adversarial scenarios.

- This makes your LLM testing checklist more realistic.

3. Skipping safety testing

- Some teams assume users will not misuse the system. This is risky.

- Users can make mistakes, and attackers may try to exploit your system.

- Safety checks must be included in your AI model testing checklist.

4. No monitoring after deployment

- Testing before launch is not enough. You need continuous monitoring after release.

- Model outputs can change over time, and new risks can appear.

- This is critical for long-term LLM production readiness checklist success.

5. Using the same model for evaluation

- Evaluating outputs using the same model can create blind spots. It may miss its own errors.

- Use a different model or human review for better results in your enterprise LLM testing checklist.

Turning This Checklist Into a Scalable Process

The goal is not to run all 50 checks manually every time. The goal is to build a system that can automatically catch issues and highlight areas that need human review.

This is an important step in applying an effective LLM testing checklist and following strong LLM testing best practices.

1. Integrate testing into CI/CD

- Your most reliable automated tests, such as accuracy checks, format validation, and latency limits, should be part of your CI/CD pipeline.

- If these tests fail, deployment should stop.

- Treat them like required checks when testing large language models.

2. Maintain an evaluation registry

- Keep a versioned record of your test datasets, evaluation scores, prompts, and model versions.

- This helps you track changes over time and debug issues faster.

- This is essential for a scalable AI model testing checklist.

3. Set a review schedule for manual testing

- Some checks, like bias, security, and safety, cannot be fully automated.

- Schedule regular reviews, such as monthly evaluations, to ensure proper LLM validation before deployment and after updates.

4. Track failures effectively with TestDino

- Use tools like Testdino to monitor failures across your testing process.

- When tests fail, it helps you identify whether the issue is in the model, integration, or infrastructure.

- It also groups similar failures, saving time when testing large language models.

5. Assign clear ownership

- Each area, such as safety, security, and performance, should have a responsible owner.

- Without ownership, these important checks are often ignored under time pressure.

- This is critical for maintaining a strong enterprise LLM testing checklist.

By building systems, automating where possible, and assigning clear responsibilities, your LLM production readiness checklist becomes scalable, reliable, and easier to manage.

{{cta-image-third}}

Conclusion

Most failures in production do not happen because the model is bad. They happen because teams did not test the right things before release. This is why having a proper LLM testing checklist is critical for success.

This checklist gives you a clear structure to improve your testing process. Start with accuracy and regression testing, as they provide the fastest impact. Then gradually include areas like security, bias, and monitoring as your testing process becomes stronger.

This step-by-step approach follows effective LLM testing best practices and improves LLM validation before deployment.

If managing this entire LLM testing checklist feels difficult for your team, that is completely normal. Testing large language models requires time, expertise, and the right setup.

FAQs

1. What is an LLM testing checklist?

An LLM testing checklist is a structured list of tests used to validate large language models before deployment. It helps ensure accuracy, safety, performance, and reliability when testing large language models in real-world applications.

2. Why is LLM validation before deployment important?

LLM validation before deployment is important because these models can produce incorrect, biased, or unsafe outputs. Without proper testing, these issues can affect user trust and business outcomes. A strong checklist helps prevent such risks.

3. How is LLM testing different from traditional software testing?

In traditional systems, outputs are predictable. But when testing large language models, outputs can vary even for the same input. This requires semantic evaluation, multiple test scenarios, and following proper LLM testing best practices instead of simple pass or fail checks.

4. What are the key areas covered in an AI model testing checklist?

A complete AI model testing checklist includes:

- Accuracy and output quality

- Hallucination detection

- Bias and fairness

- Security and abuse resistance

- Performance and scalability

- Regression testing

- Evaluation and metrics

5. Can LLM testing be automated?

Yes, many parts of an LLM testing checklist can be automated, especially accuracy, regression, and performance testing. However, some areas, like bias, hallucination, and safety, still require human review for better results in an enterprise LLM testing checklist.

.svg)

.png)

%20(1).png)

%20(1).png)